
Pre-Packaged to Custom: Developing and deploying enterprise generative AI tools

Altman Solon is the largest global TMT strategy consulting firm exclusively dedicated to the Telecommunications, Media, and Technology sectors. The series “Putting Generative AI to Work” examines how generative AI is impacting business. Our team surveyed 292 senior business leaders and spoke to 21 industry experts to understand the adoption of generative AI tools for specific enterprise use cases. This article, the second in our series, explores what enterprise development and deployment of generative AI will look like and how companies prioritize the development of generative AI solutions in the workplace.

One of the most groundbreaking tech advancements in recent years has been the emergence of generative AI applications. A subset of artificial intelligence, generative AI is unique in its ability to generate new content autonomously, including text, images, video, 3D shapes, and more. In the business world, generative AI tools have the potential to improve productivity across numerous business functions, including (but not limited to) software development, marketing, customer service, and product management.

However, enterprises that want to adopt generative AI tools face more complex choices than they do when adopting well-established enterprise Software-as-a-Service (SaaS) solutions. How a company opts to develop and deploy generative AI tools involves tradeoffs across security, tool customization, licensing fees, deployment costs, scalability, time to deploy, and availability of internal skillsets. Our survey of 292 business leaders revealed the following:

- 70% of enterprises were interested in outsourcing some parts of generative AI app development.

- 48% of enterprises preferred deploying generative AI models on the public cloud of their choice.

- 62% of enterprises ranked “customization” as the most important criterion to consider when implementing generative AI tools, followed by 55% citing “licensing and deployment costs.”

Enterprises prefer adopting out-of-the-box generative AI models and public cloud development

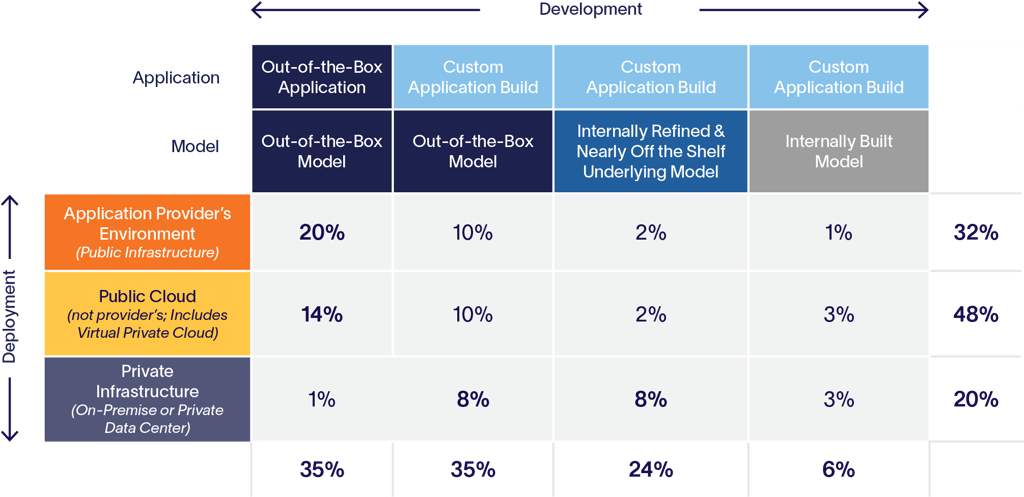

When launching a generative AI application, companies must choose a development type (covering both the application itself and the deep generative model that powers it) and decide how to host and run the application (deployment). Development options range from pre-packaged to wholly customizable to a mixture of both. There are four main types:

- Out-of-the-box: The application and the deep generative AI model are pre-packaged and do not require any customization. An example of this is ChatGPT, which sits on OpenAI’s deep generative model.

- Custom-built app on an out-of-the-box model: This application is developed for a specific use case and sits on top of an out-of-the-box deep generative model. For example, the app Jasper is designed for the needs of content marketers but sits on top of OpenAI’s deep generative model.

- Custom-built app on a fine-tuned model: This custom application sits on a model further developed in-house for specific uses. Platforms like Hugging Face and OpenAI allow any developer to pull together various open-source deep generative models to create a customized model for a specific use case. Over 10,000 organizations use Hugging Face’s library of open-source models to build custom deep generative models.

- Custom-built app on a model built in-house: This custom application sits on a model entirely developed in-house and trained on a mix of public and private data. Organizations that handle sensitive user data, like healthcare organizations, tend to favor this development type.

Current and Intended Development & Deployment of Generative AI

n = 240

Out-of-the-box models require limited internal technical resources and are cheaper than customizing or building an app or model from scratch. The current market for generative AI-powered business tools is relatively small compared to the market for SaaS business applications, so companies have limited options for fully out-of-the-box solutions. Nevertheless, companies surveyed show a marked preference for fully out-of-the-box solutions and for custom apps built on an out-of-the-box model, each appealing to 35% of respondents. That a combined 70% of respondents prefer solutions built on an out-of-the-box model suggests an emerging market for ready-made, enterprise-grade generative AI tools. Despite the appeal of out-of-the-box solutions, enterprises can face difficulties adapting the generative AI model to their needs.

“In many cases, you can’t use an out-of-the-box API and assume it’ll work well in your environment… in those cases where fine-tuning is necessary, the learning period must be factored in when deploying a model.”

— Product Security Head, Generative AI Model Provider —

Custom apps built on a fine-tuned model interest 24% of surveyed organizations, and only 6% are interested in custom apps built on an in-house model. Respondents cited the high costs and technical staffing required as deterrents to creating an in-house generative AI model.

In parallel to choosing how to develop a generative AI app, a company must also determine how to host and run this model. These deployment options include:

- The model provider’s cloud environment

- The enterprise user’s public cloud environment

- The enterprise user’s private cloud environment

Nearly half (48%) of surveyed organizations preferred to deploy generative AI tools on the public cloud of their choice, independent of the generative AI application provider’s environment. Nearly one-third of organizations (32%) showed interest in deploying generative AI tools on the application provider’s environment. The remaining 20% reported interest in deploying generative AI tools on private infrastructure. This is a stark difference from SaaS applications, the overwhelming majority of which are currently deployed on the provider’s infrastructure.
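
The preference shares reported in this section can be sanity-checked with simple arithmetic. The following is a minimal Python sketch and not part of the original analysis: the dictionary names and keys are illustrative labels chosen here, while the percentages are the ones stated in this article.

```python
# Shares of surveyed organizations (percent), as reported in this article.
# The names and keys below are illustrative labels, not survey wording.
development_pct = {
    "out_of_the_box": 35,
    "custom_app_on_out_of_the_box_model": 35,
    "custom_app_on_fine_tuned_model": 24,
    "custom_app_on_in_house_model": 6,
}

deployment_pct = {
    "enterprise_public_cloud": 48,
    "provider_environment": 32,
    "private_infrastructure": 20,
}


def total(shares):
    """Sum the percentage shares of one survey question."""
    return sum(shares.values())


# Combined share of respondents favoring an out-of-the-box model,
# with or without a custom-built app on top of it.
out_of_the_box_combined = (
    development_pct["out_of_the_box"]
    + development_pct["custom_app_on_out_of_the_box_model"]
)

print(total(development_pct))    # 100
print(total(deployment_pct))     # 100
print(out_of_the_box_combined)   # 70
```

Both distributions account for all respondents, and the combined 70% figure is the sum of the two out-of-the-box options.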

Companies consider generative AI development and deployment tradeoffs

Companies must decide on generative AI tool development and deployment options in tandem to evaluate each option’s tradeoffs. On the one hand, organizations opting for wholly out-of-the-box solutions (e.g., model and application deployed on the provider’s environment) must consider the security risks of housing data in the provider’s domain. The tradeoff is a cheaper, potentially more scalable solution with lower demand for technical resources. On the other hand, organizations choosing in-house solutions (e.g., fine-tuned or custom-built models) must consider the extent of necessary financial and technological investments in exchange for greater security and customization.

The more customizable the apps and models are, the more resources are required. In the case of fine-tuned or custom-built models, machine learning experts need to continually train the deep learning model with proprietary data.

"We have a big machine learning and DevOps footprint, so deploying generative models is not that challenging for us…if you don’t have the talent to train a model, you’ll use an API."

— Associate Director of Data and Machine Learning —

Custom apps deployed on a provider’s infrastructure carry a security risk, especially when sensitive, private data is involved. Deploying a custom-built app and model on private infrastructure is time-consuming and requires internal technical resources. However, this option offers the highest level of security and is typically suited to regulated industries like healthcare or financial services.

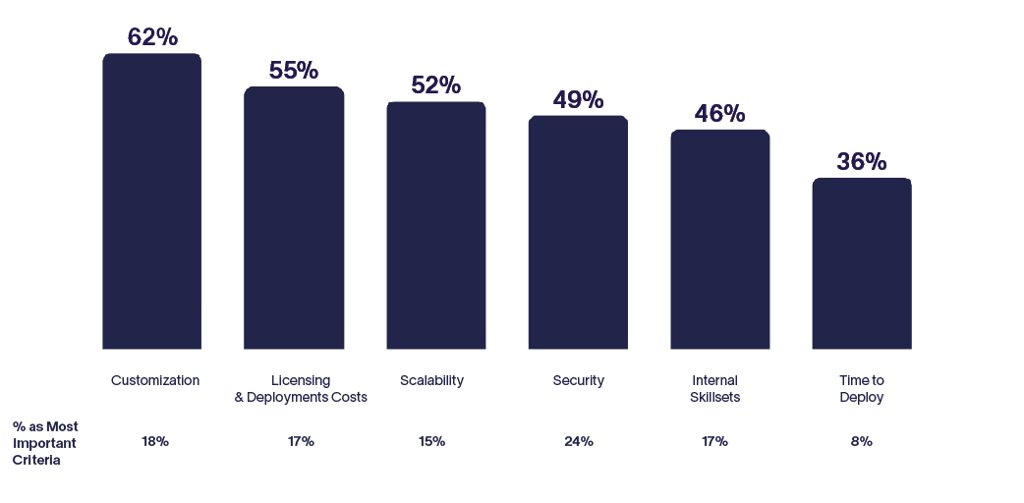

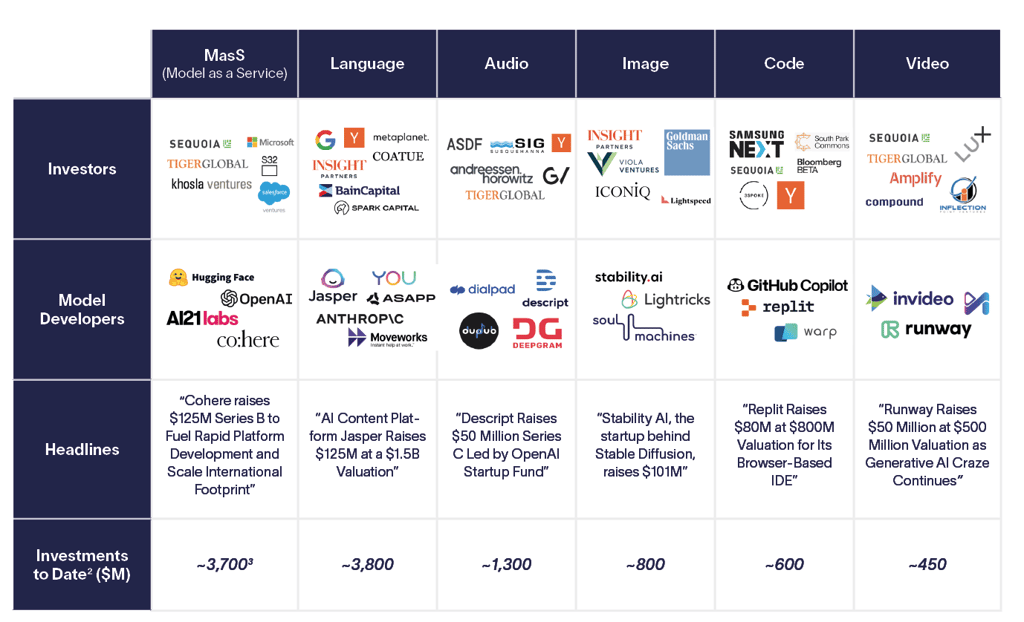

Tool customization and deployment cost move the needle overall; enterprises with sensitive data prioritize security

Organizations surveyed cited customization as the most important criterion (62%), followed by deployment cost (55%) and scalability (52%) when deciding how to develop and deploy generative AI tools. The importance given to customization suggests a potential growth market for Model as a Service (MaaS) platforms like Cohere, Hugging Face, or AI21 Labs, which allow for customized models. Apps tailored to the needs of specific professions, like Nabla’s CoPilot for physicians and Jasper for content marketers, reflect the needs of enterprise users who require generative AI tools for career and business functions.

Although security didn’t make the top three decision criteria, 24% of organizations surveyed listed security as the most important criterion. Respondents who chose security as their deciding factor were more likely than others to deploy on private infrastructure, suggesting particular attention to safety.

Decision Criteria Importance

n = 240, % of respondents ranking criteria as top 3 most important

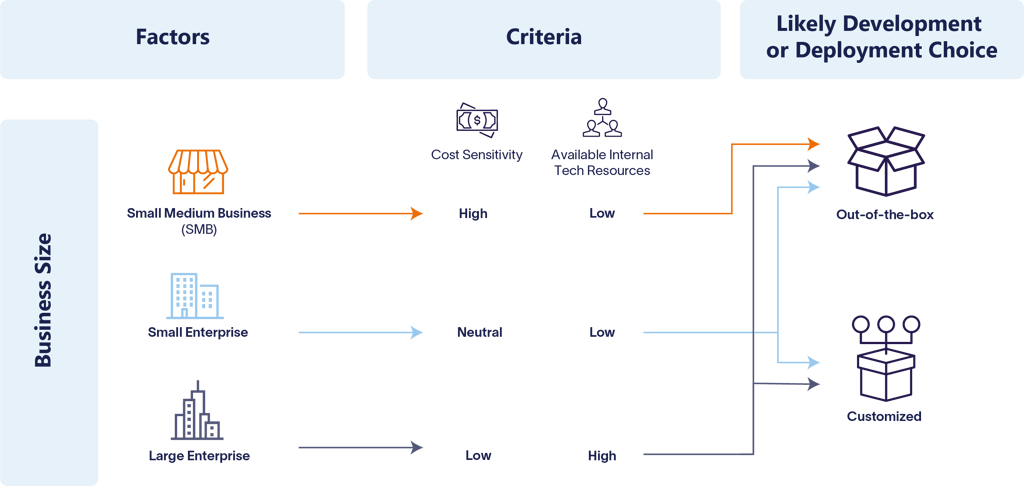

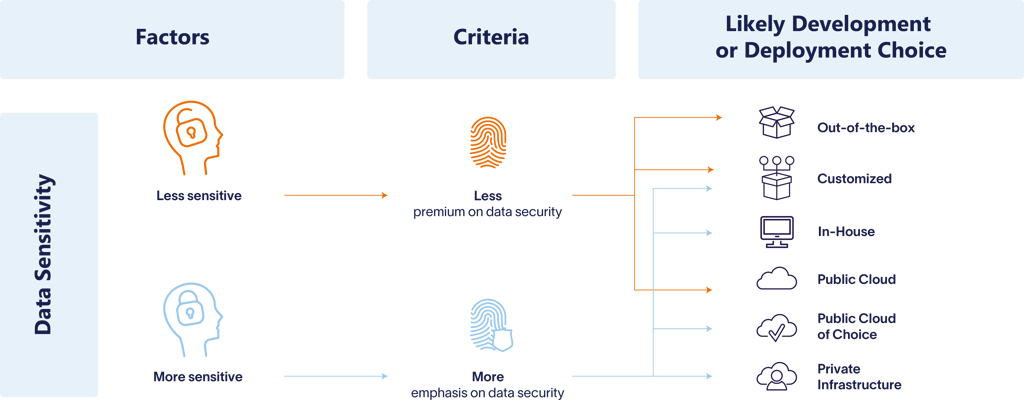

Business size, data sensitivity, and intended audiences influence decision-making

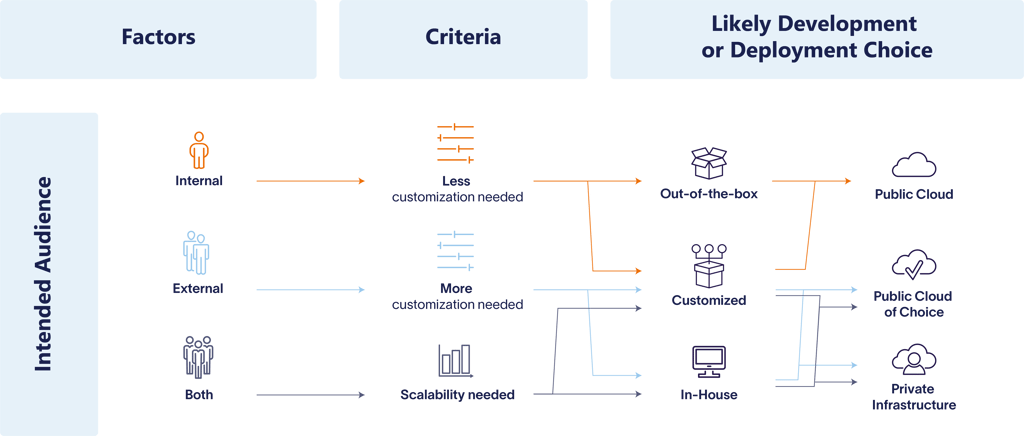

According to our research, priorities for developing and deploying generative AI tools can depend on a combination of factors, including company size, data sensitivity, and the application’s intended audience (internal versus external).

Usually, the size of a business correlates with available in-house technical expertise and funds to invest in generative AI tools. Startups and small to medium-sized businesses (SMBs) are cost-sensitive and lack internal resources to develop and build out customized solutions. Small enterprises trend neutral on costs but may lack in-house technical resources. Finally, large enterprises are less concerned about cost and tend to have a dedicated internal data science or AI team. As such, small businesses are more likely to adopt out-of-the-box options, whereas larger companies are more likely to invest financial and technical resources to adopt customized options.

Regarding data sensitivity, companies in regulated spaces like pharmaceuticals, financial services, insurance, and healthcare, or high-security spaces like government agencies, pay particular attention to data security in development and deployment. Companies in areas with less sensitive data, like technology or media, may still value security, but less so than those in regulated industries. These sectors have more leeway in choosing a generative AI solution.

Finally, the intended audience for an AI tool can determine how a company chooses to develop and deploy a generative AI solution. AI solutions used internally, like tools that provide document summaries, research, or copywriting, don’t require substantial customization or scalability. However, client-facing enterprise AI tools (for example, customer chatbots, retail interfaces, and virtual assistants) correlate with a greater need for customization and scalability. For tools with both internal and external audiences, scalability is a top priority.

The incubation of an emerging enterprise tool market

The marketplace for enterprise-level generative AI apps is nascent. However, industry giants like Microsoft are soft-launching Copilot, an enterprise-grade generative AI chatbot (equipped with the ability to generate meeting transcripts, summarize emails, and organize meetings), and major VC firms are making substantial generative AI investments. This activity indicates that major players and a new crop of startups are trying to crack the code for generative AI for business. A generation of executives accustomed to “plug and play” SaaS solutions will look for generative AI tools that are customizable, simple to deploy, and (relatively) inexpensive. Generative AI apps can potentially appeal to a wide swath of companies if they’re as easy to incorporate and personalize as SaaS tools. Companies in regulated industries will look for development and deployment methods that ensure the highest level of security for sensitive data.

Today, companies that want to incorporate generative AI in their businesses must put considerable thought into developing and deploying these tools. These choices have implications for infrastructure providers and will increase demand for computing, storage, and networking infrastructure. We’ll delve into this topic in our next installment of "Putting Generative AI to Work."

About the Analysis

In March 2023, Altman Solon analyzed emerging enterprise use cases in generative AI. Leaning on a panel of industry experts and a survey of 292 senior executives, Altman Solon analyzed preferences for developing and deploying enterprise-grade generative AI tools. The survey also looked in-depth at the key deciding factors that go into developing and deploying generative AI tools. All organizations surveyed were based in the United States and varied in business size. Explore additional insights from our “Putting Generative AI to Work” series or read our first article in the series on emerging generative AI use cases for enterprises.

This article was co-written with GPT-4. GPT-4 assisted in brainstorming and simplifying technical terms and concepts.


Thank you to Elisabeth Sum, Oussama Fadil, Vivian Arsanious, and Joey Zhuang for their contributions to this report.